Oral Exams: The Perfect AI-Proof Assessment Method

See why the oral exam is gaining ground in higher ed. Use Edvisor’s oral defense feature to reduce AI misuse and assess real student understanding.

Oral exams are one of the most effective assessment methods in the age of AI. They are re-emerging as a go-to way for instructors to check student understanding in real time and probe students' reasoning. The format makes it much harder for students to rely on generative AI, and it makes analysis, communication, and critical thinking much easier to evaluate.

Growing concerns about AI-enabled cheating have made oral exams more important. Professors are starting to trust spoken formats over take-home written work, which students can complete with AI tools.

What Is an Oral Exam?

An oral examination requires students to articulate their reasoning verbally in response to questions from an instructor or panel, surfacing the cognitive process behind an answer rather than the answer alone. These verbal exams are used in both academic and professional settings and are also known as a viva, viva voce, or oral test.

Oral assessments are especially valuable because they go beyond simple recall. To succeed professionally, students need to build subject expertise and learn to explain it confidently, and interactive oral assessments let instructors check both skills directly.

This growing interest in oral exams is also shaping course design. Catherine Hartmann's 2025 article in College Teaching documents one such redesign: in an upper-level humanities course, she rebuilt the syllabus around backward design, integrated social annotation, and used regular cold-calling as scaffolded preparation for a final oral examination. Her case study illustrates a broader pattern: rather than bolting oral exams onto existing courses, faculty are restructuring courses around the format.

Types of Oral Exams

Oral examinations take several forms, each suited to different disciplinary and pedagogical aims:

- Guided conversations: The assessor asks open questions and gives the student room to develop an answer, following up with requests to expand on a point or clarify their reasoning.

- Presentations: A student presents their work and then justifies the ideas and decisions behind it under questioning. The thesis defense is a common example.

- Practical exams: Used widely in medicine and other practice-based fields, this oral assessment asks students to talk through their work, such as patient cases, diagnoses, or other practical scenarios.

- Simulations: Students demonstrate their skills in staged professional situations, such as a role-played counselling session or a business consultation.

- Follow-up after a written exam: After a student submits a written test, the examiner holds a short verbal discussion to see how well they can explain and clarify their answers. This is a good way to test the reasoning behind the written work.

Things to Consider Before Conducting Oral Exams

Oral exams need careful design. Higher education institutions must keep students' diverse needs in mind: language barriers, speech anxiety, and neurodivergence can all affect performance in an oral exam. Instructors should therefore measure true understanding rather than a student's fluency or speaking speed. Besides making the tests accessible to all students, institutions should also consider the following factors:

- Faculty often need more time to build strong questions, run the exams, and manage the whole assessment process. That extra work can be heavy, especially when one instructor has to review responses and give useful feedback to a large group of students.

- This is one reason institutions sometimes hold back from using oral exams more often. The format can be very useful, yet it increases faculty workload.

- Faculty need training in asking follow-up questions consistently and without personal bias. To support diverse student needs, approved accommodations (such as language support for ESL learners) should also be in place.

- Edvisor can help reduce some of this workload. Professors can easily set up oral exams with clear rubrics aligned with their learning outcomes. Edvisor also allows students to respond in any language, which makes the oral assessment more inclusive while keeping it AI-resilient and academically rigorous.

The Limits of Traditional Written Assessment

Traditional written assessments produced reliable signals about student understanding when completion required productive struggle. Generative AI has weakened that signal. A correctly answered take-home assignment no longer demonstrates that the student worked through the underlying reasoning, which has implications for course-level diagnostic accuracy and for the credibility of the grade or degree itself. Many leading institutions are responding not by abandoning written work but by pairing it with formats AI cannot complete on the student's behalf.

Shifting Toward In-Person Exams

Three lines of evidence support the shift. First, institutional adoption: the University of Pennsylvania has paired spoken exams with written papers, citing both academic integrity and the development of critical thinking and creativity. Second, large-sample empirical work: Droulers, Krautloher, and Shaeri (2026), publishing in Teaching in Higher Education, surveyed 290 students across 25 subjects in a three-year project at an Australian institution. Their finding: interactive oral assessments combine formative and summative functions effectively, reduce academic dishonesty, and increase student confidence. Third, evidence on outcomes: Sotiriadou and colleagues (2020) found that authentic assessments connected to real-world tasks reduce cheating and build employability-related skills, including professional identity formation.

Authentic assessments give students a chance to build and demonstrate the genuine understanding they will need beyond university. To make academic integrity and career readiness core goals, professors need to redesign their assessments. We've compiled a comprehensive guide to help professors in any field audit and redesign their assessments for AI resilience. If you just want a list of quick hacks to implement, download the guide and scroll to its last page.

Explore practical ways to make assessments AI-resilient

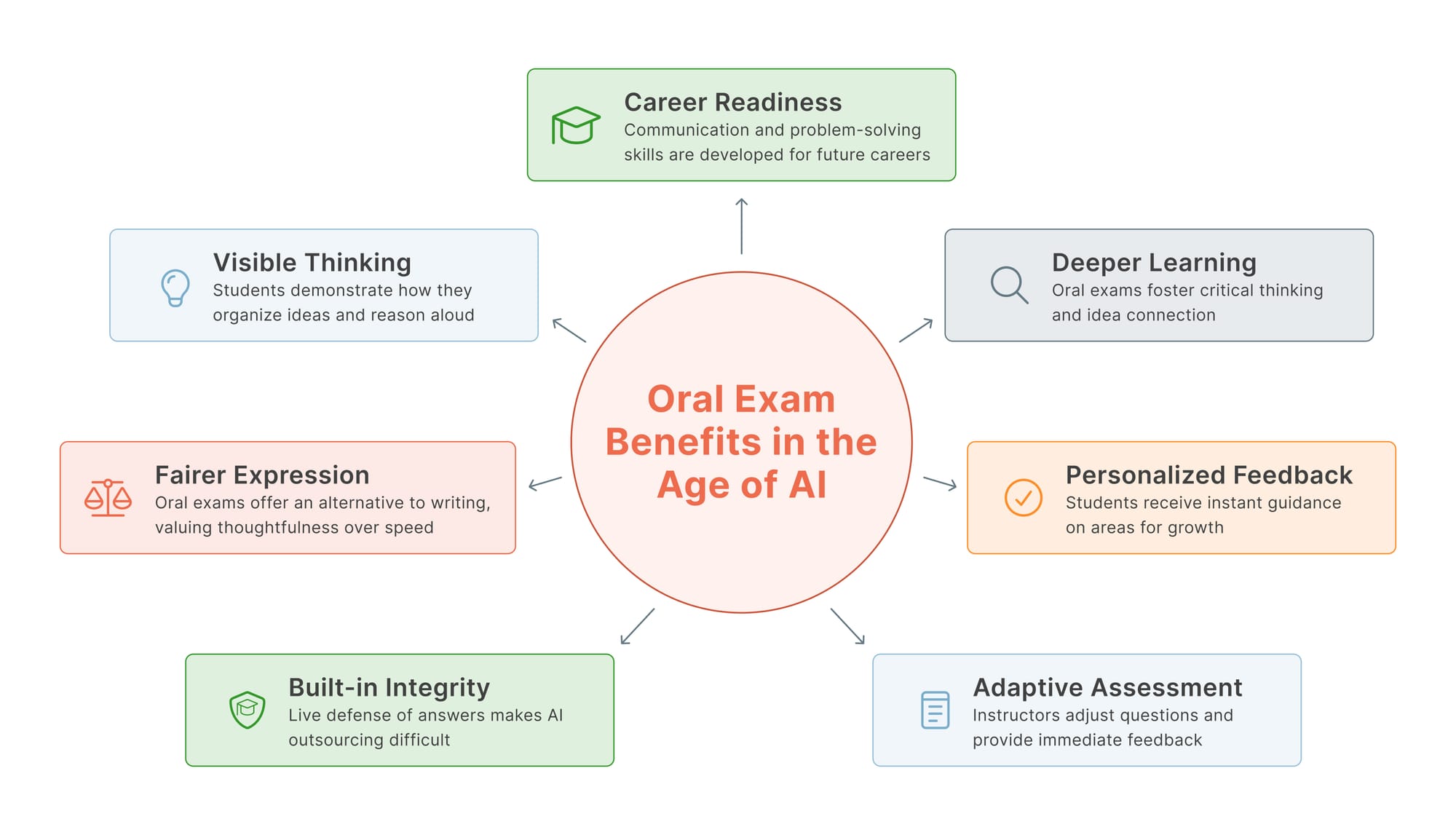

Oral Exam Benefits in the Age of AI

Oral evaluation makes learning visible and gives students opportunities to correct misconceptions. Let's look at why it is a smart response to AI misuse:

- An oral exam makes assessment more meaningful because the student's thinking process is revealed in real time. Professors can see clearly how students organize ideas and work through problems. The format can also be fairer, since it values thoughtfulness over speed. In an undergraduate engineering course at George Washington University, Barba and Stegner (2026) examined 58 students in just two days using a simple format of live coding with real-time explanation, showing that oral assessment can work at a typical class size. Their key takeaway was that academic integrity can be built into the assessment format itself rather than relying only on policing AI use.

- Assessment becomes more accurate when instructors can respond to students' mistakes in the moment and redirect them.

- A stronger teacher-student connection develops in this form of assessment because students receive immediate, individualized feedback. That helps each student understand where they are struggling and how to improve.

- Oral exams help students develop critical thinking by having them respond to scenario-based questions in the moment. During the oral test, students take more responsibility for their claims and connect ideas across texts. This kind of authentic assessment builds professional skills that carry over to situations such as job interviews. In a sample of 722 genetics students at the University of South Australia (UniSA), Davey et al. (2025) linked the introduction of interactive oral assessments to significantly better final assessment performance and higher overall course grades. Oral assessments, in other words, did more than protect academic integrity; they also appear to support stronger learning outcomes.

- For some students, it's harder to express knowledge in written form. Oral exams can be a good alternative for them.

Scaling Oral Examination with AI Voice Agents

AI voice agents are already being used to conduct job interviews. Because they can follow a script consistently and do not get tired, they can assess candidates much more quickly than human interviewers. This is what inspired Professor Panos Ipeirotis to use AI voice agents for oral exams.

How an NYU Professor Created a System for Scalable Oral Examination

Panos Ipeirotis, professor at NYU's Stern School of Business, faced a coordination problem familiar to anyone who has tried to scale oral examination: how do you give every student in a class an individualized oral assessment without consuming weeks of instructor time? In a recent arXiv preprint with Konstantinos Rizakos (2026), he documents a system built on ElevenLabs voice agents, each playing a distinct role: authentication, project discussion, and case-based questioning. A council of LLMs provided initial grading, which the instructor then verified and adjusted. The AI graders proved stricter than human evaluators and produced unusually specific feedback. Here’s an example:

"Your understanding of metric trade-offs and Goodhart's Law risks was exceptional—the hot tub example perfectly illustrated how optimizing for one metric can corrupt another."
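To make the grading step above concrete (a council of LLMs proposes a grade, then the instructor verifies and adjusts it), here is a minimal sketch. It is our own illustration under stated assumptions, not the method from Ipeirotis and Rizakos: it takes the median of several AI grader scores as the provisional grade and flags the answer for closer instructor review when the graders disagree by more than a chosen threshold. The function names `council_grade` and `final_grade`, the median rule, and the `disagreement_threshold` parameter are all hypothetical.

```python
from statistics import median
from typing import Optional


def council_grade(scores: list[float], max_points: float,
                  disagreement_threshold: float) -> tuple[float, bool]:
    """Combine several AI grader scores into a provisional grade.

    Hypothetical aggregation rule: the provisional grade is the median score,
    and the answer is flagged for instructor review when the highest and
    lowest scores differ by more than disagreement_threshold.
    """
    if not scores:
        raise ValueError("at least one grader score is required")
    for s in scores:
        if not 0 <= s <= max_points:
            raise ValueError(f"score {s} is outside 0..{max_points}")
    provisional = median(scores)
    needs_review = (max(scores) - min(scores)) > disagreement_threshold
    return provisional, needs_review


def final_grade(provisional: float, instructor_score: Optional[float] = None) -> float:
    """The instructor has the last word: an explicit score replaces the AI grade."""
    return provisional if instructor_score is None else instructor_score


# Three hypothetical graders score one oral answer out of 10.
grade, flag = council_grade([8, 7, 9], max_points=10, disagreement_threshold=1.5)
print(grade, flag)              # 8 True  (spread of 2 exceeds 1.5)
print(final_grade(grade, 7.5))  # 7.5     (instructor adjusted the grade)
```

Using the median rather than the mean keeps a single outlier grader from dragging the provisional grade up or down; whatever aggregation rule is chosen, the instructor review step remains the safeguard.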

Ipeirotis also learned about gaps in his own teaching from the students' responses. Seventy percent of students agreed that oral tests were the best way to check real understanding. They accepted the assessment itself but not how it was run: it felt too fast and did not give them time to think. Edvisor’s oral defense feature addresses this by allowing students to retry, which makes the submission less strict so students are less likely to feel intimidated. The feature is covered in detail below.

AI tools are also making oral assessment easier to run. The core principle behind oral exams is simple: if a student understands something, they should be able to explain it clearly. Across the country, a small but growing number of educators are experimenting with oral exams, and they see them as a practical way to reduce students’ reliance on AI tools like ChatGPT. With the right digital tools, large-scale oral assessment is not just possible but manageable and effective. Using a structured video-based format, Concurrent Video-Based Oral Exams (ConVOEs), hundreds of students were assessed at once, and instructors could still review responses carefully using pause, rewind, and playback-speed controls.

Edvisor's oral defense feature is part of a scaffolded process: it serves as a spoken check on the student's written short answer or submission. Professors can set it up as follows.

Step 1

Select ‘Quizzes’ in the left panel and click the ‘Create a Quiz’ button in the top-right corner.

Step 2

Generate questions with AI based on the uploaded readings, or enter your own. Questions can be in any format; the professor defines the difficulty, mastery level, and points for the quiz.

Step 3

Before closing this window, the professor can enable ‘Oral Defense’ by clicking the checkbox.

Step 4

Once the questions are ready, the professor goes to Quizzes, selects the quiz, and clicks the ‘Edit’ button to add the rubric for the oral defense submissions (this can be done individually for each question in the quiz). The rubric tells the AI how to grade an oral response, and the professor also sets the following:

- Maximum number of attempts

- Time pressure (on or off): when enabled, students see a timer as soon as they submit their written answer, so they have no time to prepare

- Recording length

What the Student Sees

- Once the student submits a response to a quiz question, a pop-up box prompts them to complete the follow-up question before moving on to the next one.

- Brief guidelines are shown before the follow-up question appears.

- The AI generates a custom follow-up question from the student’s submission, so it tests what the student actually submitted.

- The student clicks ‘Start Recording’ and records their answer. If satisfied, they submit it; if not, they can delete the recording and re-record, within the attempt limit set by the professor.

- As soon as the student submits, the AI grades the response, shows the score, and explains how it arrived at that score.

Create an Oral Exam With AI Now

Besides AI detection tools and policy statements, higher education also needs assessment methods that test real understanding. Faculty seeking AI-resilient assessment do not need to choose between integrity and scalability. Edvisor implements interactive oral examination as part of a scaffolded assessment design, grounded in the same learning science literature cited above, and integrated into existing course workflows.

Citations

- Richter, S., & Dodd, P. (2025, October 2). The case for oral exams in the age of AI. University of Auckland.

- Gecker, J. (2026, March 25). Perfect homework, blank stares: Colleges are turning to oral exams to combat AI. NBC Connecticut.

- Sotiriadou, P., Logan, D., Daly, A., & Guest, R. (2020). The role of authentic assessment to preserve academic integrity and promote skill development and employability. Studies in Higher Education, 45(11), 2132–2148. https://doi.org/10.1080/03075079.2019.1582015

- Ipeirotis, P., & Rizakos, K. (2026). Scalable and personalized oral assessments using voice AI. arXiv. https://doi.org/10.48550/arXiv.2603.18221

- Droulers, M., Krautloher, A., & Shaeri, S. (2026). Interactive oral assessment: co-existence of formative and summative purposes. Teaching in Higher Education, 31(1), 67–85. https://doi.org/10.1080/13562517.2025.2549945

- Barba, L. A., & Stegner, L. (2026). The conversational exam: A scalable assessment design for the AI era. arXiv preprint arXiv:2601.10691.

- Davey, S. K., Birbeck, D., Nallaya, S., Sallows, G., & Della Vedova, C. B. (2025). Utilising one-on-one interactive oral assessments as the major final assessment within a bioscience course. Assessment & Evaluation in Higher Education, 50(7), 1154–1171. https://doi.org/10.1080/02602938.2025.2502577

- Hartmann, C. (2025). Oral exams for a generative AI world: Managing concerns and logistics for undergraduate humanities instruction. College Teaching, 1–10. https://doi.org/10.1080/87567555.2025.2558563

Frequently Asked Questions

What Are Examples of Oral Exams?

Common formats include thesis defenses, clinical case presentations in medical and nursing education, language proficiency interviews, viva voce examinations in graduate programs, and conversational follow-ups to written submissions.

What Are the 4 Types of Assessment?

The four main types of assessment are diagnostic, formative, interim, and summative. Each applies at a different stage of instruction to gauge how much students have learned, and together they inform teaching strategies and measure achievement.

What Is an Oral Exam in College?

An oral exam in college is typically a 10- to 15-minute test of how deeply a student understands the material. Because answers are delivered directly to the instructor or panel, dishonest shortcuts are much harder to take. In many cases, instructors also ask follow-up questions.

What Is the Format of an Oral Exam?

Questions in an oral exam can be planned in advance or asked during the conversation. Students need to demonstrate their knowledge, respond to scenarios, and articulate their reasoning.

Common formats include:

- Structured interviews

- Clinical simulations

- Presentations

- Conversational Q&A sessions

Is an Oral Exam Harder Than a Written One?

In one study of learners in an international medical training program, students rated oral exams as slightly easier than written exams on average: the mean difficulty score was 2.75 for oral exams versus 3.00 for written exams, a statistically significant difference.

(Kelly SP, Weiner SG, Anderson PD, Irish J, Ciottone G, Pini R, Grifoni S, Rosen P, Ban KM. Learner perception of oral and written examinations in an international medical training program. Int J Emerg Med. 2010 Feb 5;3(1):21-6. doi: 10.1007/s12245-009-0147-2. PMID: 20414377; PMCID: PMC2850976.)